T-RLPM

Transformer-based Soft Actor–Critic for dynamic portfolio allocation.

Problem Definition

We model portfolio management as a Markov Decision Process. Each state is a multi-asset history tensor (prices, indicators, temporal features) over a global stock pool; customizable pools are handled by masking excluded assets. An action is a weight vector over all assets plus a cash position. The environment transitions with market dynamics, and the reward is the change in portfolio value induced by the chosen allocation. The objective is to learn a policy that maximizes risk-adjusted return while respecting the pool mask and the cash position, using transformer-based encoders within an RL framework.
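The action and reward described above can be illustrated with a minimal sketch. It is not the project's implementation: the function names `masked_weights` and `step_reward` are hypothetical, the reward is taken as the absolute one-step change in portfolio value, cash is assumed to earn zero return, and transaction costs are ignored.

```python
import math

def masked_weights(scores, mask, cash_score=0.0):
    """Softmax over [cash] + assets; assets with mask False get weight 0.

    Index 0 of the result is the cash position (always allowed).
    """
    logits = [cash_score] + list(scores)
    keep = [True] + list(mask)
    # Subtract the max over allowed entries for numerical stability.
    m = max(l for l, k in zip(logits, keep) if k)
    exps = [math.exp(l - m) if k else 0.0 for l, k in zip(logits, keep)]
    total = sum(exps)
    return [e / total for e in exps]

def step_reward(value, weights, asset_returns):
    """Return (reward, new_value) for one step.

    Assumption: cash earns zero return and there are no transaction costs.
    """
    growth = weights[0] + sum(
        w * (1.0 + r) for w, r in zip(weights[1:], asset_returns)
    )
    new_value = value * growth
    return new_value - value, new_value

# Three assets in the global pool; the third is excluded from the custom pool.
weights = masked_weights([0.0, 0.0, 5.0], [True, True, False])
reward, new_value = step_reward(100.0, weights, [0.03, -0.03, 0.50])
```

Because the third asset is masked out, its high score and large return have no effect on the allocation or the reward.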

T-RLPM is a transformer-based reinforcement learning framework for portfolio management that replaces shallow MLPs with attention encoders in both actor and critic to capture cross-asset and temporal structure.

Methodology

We encode each asset's lookback features, together with mask tokens for excluded assets, into a pooled sequence, then pass it through multi-head attention encoders to capture temporal and cross-asset structure. Mask tokens let the model handle any user-defined pool while keeping the input size fixed. The policy is trained with Soft Actor-Critic (SAC), using decoupled attention-based actor and critic networks that ingest the masked embeddings and optimize portfolio weights (including a learnable cash position) to maximize the incremental portfolio-value reward. In short, we fuse maskable customizable stock pool (CSP) representations with transformer encoders and SAC to improve decision making while retaining one-shot training across pools.
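The masking idea can be sketched as a single forward pass of an actor-like network in NumPy. This is an illustration under several assumptions, not the T-RLPM architecture: it uses one attention head over assets only, flattens each asset's lookback window into one token instead of attending over time, draws random weights in place of trained parameters, and omits the critic and the SAC update entirely. All names (`masked_self_attention`, `mask_token`, `W_embed`, `w_out`, `cash_logit`) are hypothetical.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def masked_self_attention(tokens, keep, Wq, Wk, Wv):
    """Single-head self-attention; excluded assets are never attended to."""
    q, k, v = tokens @ Wq, tokens @ Wk, tokens @ Wv
    scores = q @ k.T / np.sqrt(k.shape[-1])
    # Block excluded assets as attention keys.
    scores = np.where(keep[None, :], scores, -1e9)
    return softmax(scores, axis=-1) @ v

rng = np.random.default_rng(0)
n_assets, lookback, n_feat, d = 5, 8, 3, 16

history = rng.normal(size=(n_assets, lookback, n_feat))  # state tensor
keep = np.array([True, True, False, True, False])        # custom pool mask
mask_token = np.zeros(lookback * n_feat)                 # learnable in practice

# Replace excluded assets by the mask token so the input size stays fixed.
tokens_in = np.where(keep[:, None], history.reshape(n_assets, -1), mask_token)

W_embed = rng.normal(size=(lookback * n_feat, d)) * 0.1
Wq, Wk, Wv = (rng.normal(size=(d, d)) * 0.1 for _ in range(3))
encoded = masked_self_attention(tokens_in @ W_embed, keep, Wq, Wk, Wv)

# Actor head: one logit per asset plus a cash logit, softmax to weights.
w_out = rng.normal(size=d)
cash_logit = 0.0  # stands in for the learnable cash position
asset_logits = np.where(keep, encoded @ w_out, -np.inf)
weights = softmax(np.concatenate([[cash_logit], asset_logits]))
```

Here `weights[0]` is the cash position and `weights[1:]` are the asset weights. Changing `keep` selects a different pool without changing any array shape, which is what allows a single trained model to serve multiple pools.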

Results

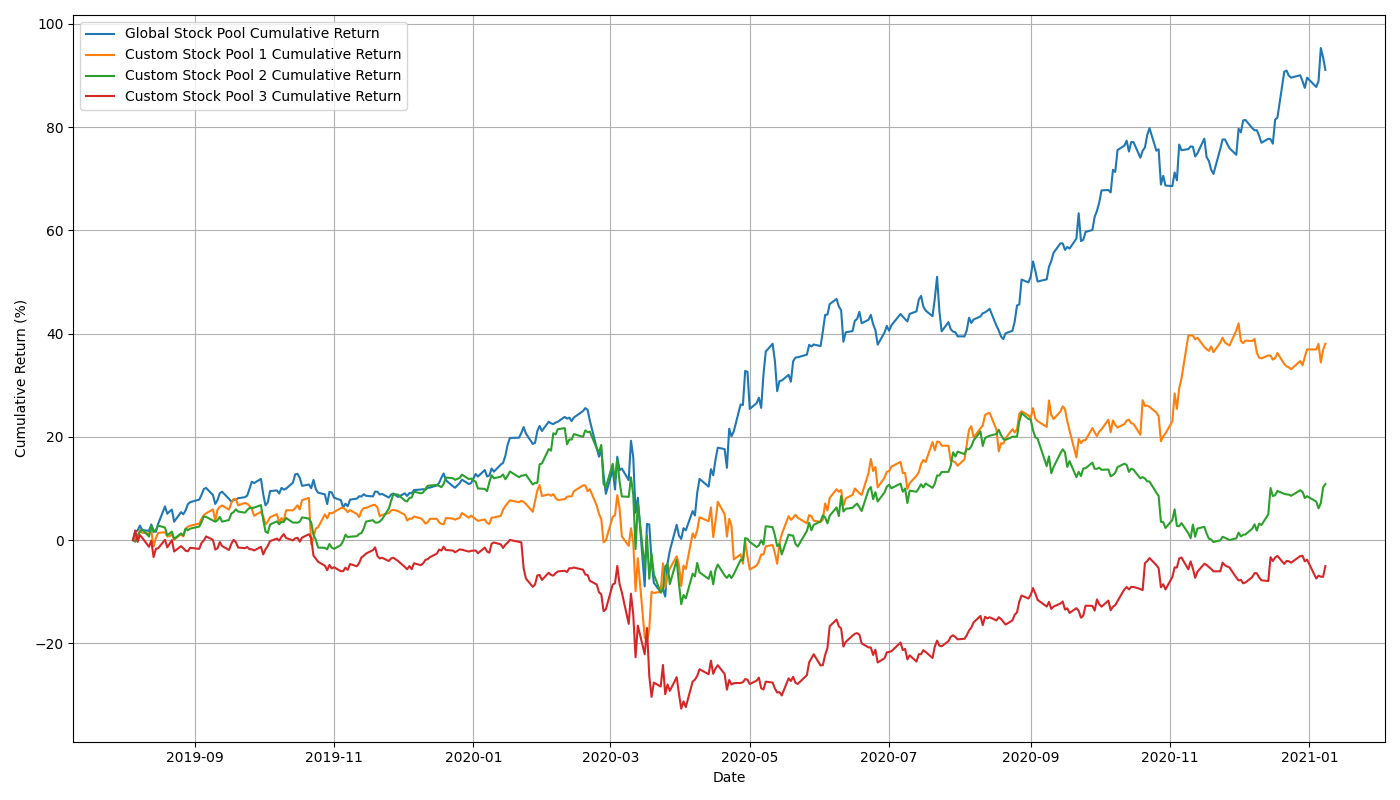

On the DJIA Global Stock Pool (GSP), T-RLPM achieves a 59.87% ARR and the best risk-adjusted return among the compared methods. Generalization to Customizable Stock Pools (CSPs), however, remains a challenge and is a focus of future work.